Better Weather Predictions Using Artificial Intelligence, Machine Learning, and Artificial Neural Networks

Abstract

This paper outlined some of the current capabilities and advancements that Artificial Intelligence (AI), Machine Learning (ML), and Artificial Neural Networks (ANNs) have brought to the understanding of the weather, and more specifically how these technologies are leveraged to better forecast it. It focused on how these technologies have helped to take in larger amounts of data and make more accurate predictions of events such as thunderstorms, snowstorms, hurricanes and tropical storms, tornadoes, and heatwaves. It discussed the parameters used in data forecasting and climate forecasting and looked at six key data concerns: data volume, data variety, data velocity, data variability, data veracity, and data complexity. It also described some of the approaches to training with the data. It covered some technologies that currently exist and how they can change the speed at which weather predictions are made. Two companies and their platforms were included: Amazon Web Services (AWS) with Mini-AWS, and Microsoft Azure with D3 Express and D3 Advanced. Lastly, it looked at different types of neural networks and some techniques associated with weather prediction.

Introduction

Artificial intelligence, machine learning, and artificial neural networks have been hot topics in computer science for some time now. Since the introduction of digital assistants such as Google Assistant, Siri, and Amazon Echo, society has become used to having a machine provide answers at the drop of a hat. This has helped people make better-informed decisions without having to wonder whether the information being provided is accurate. Society has begun to trust these devices blindly, which is not necessarily a bad thing. However, it leaves open the question of how far these technologies can grow. Weather forecasting has been around for some time and helps society plan its days. Artificial intelligence, machine learning, and artificial neural networks can help to predict the weather more accurately. This paper looks at some of the advancements these technologies have brought to the weather industry and will continue to bring.

Weather forecasting is the application of science and technology to predict the state of the atmosphere for a given location [3]. Weather forecasts are made by collecting quantitative data about the current state of the atmosphere and using scientific understanding of atmospheric processes to project how the atmosphere will evolve [3]. Weather models use mathematical equations to analyze and predict atmospheric processes and changes [6]. Weather models use a simplified topographic map.

For example, weather models sometimes use a grid size of 12 km. This means that each point on the model represents an area of 144 km² (12 km x 12 km) or larger. If the grid size were smaller, there would be more data points for the same region. For example, a 10 km x 10 km grid has cells of 100 km² each. The finer grid gives a more accurate model of what will happen [6].
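The grid arithmetic above can be checked with a short sketch. The 1,200 km x 1,200 km domain is a hypothetical size chosen only for illustration and does not come from the source.

```python
def cell_area_km2(spacing_km: float) -> float:
    """Area represented by one grid point on a square grid."""
    return spacing_km * spacing_km


def cell_count(domain_km: float, spacing_km: float) -> int:
    """Number of whole grid cells covering a square domain (hypothetical size)."""
    per_side = int(domain_km // spacing_km)
    return per_side * per_side


# Compare the two grid spacings mentioned in the text on the same domain.
for spacing in (12.0, 10.0):
    print(spacing, cell_area_km2(spacing), cell_count(1200.0, spacing))
```

On the same domain, the 10 km grid has noticeably more cells than the 12 km grid, which is the sense in which a smaller grid size yields more data points.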

Figure 1. Resolution showing a horizontal grid spacing of 2.5 km (left), 7.5 km (middle) and 80 km (right) (Finnish Meteorological Institute, 2009. Finnish Wind Atlas [13.6.2019]) [6].

Since the surge of big data in recent years, meteorologists have been seeking to harness it together with the best numerical methods to improve forecasts [4]. The field's ties to computing run deep: an early problem run on the first operational computer, the Electronic Numerical Integrator and Computer (ENIAC) at Aberdeen Proving Ground, in 1950 was a filtered version of the equations of atmospheric motion set up by Jule Charney, John von Neumann, and R. Fjørtoft. This led to meteorologists' love of computing while using and producing big data [4].

Since then, the detail included in the numerical models, as well as the spatial and temporal resolution employed, has grown rapidly. This growth has continuously challenged computing capability, and at the same time the field has been quick to employ advanced statistical and computational intelligence methods [4]. Better algorithms and the adoption of machine learning have become increasingly important. Weather prediction is an important real-time challenge, and many applications rely on its accuracy, including aviation safety, defense planning, energy applications, and beyond [4]. Better forecasts of events such as hurricanes, floods, tornadoes, thunderstorms, snowstorms, droughts, and even heat waves have helped to prevent casualties.

Artificial intelligence and machine learning have further helped to build better climate models and to measure the movement of tectonic plates, the carbon cycle, and the water cycle, which has helped to better predict earthquakes and their aftershocks, and even potential tsunamis. They have allowed for city mapping to prevent wildfires and allow scientists to better understand, and potentially even slow down, climate change. Because there are no real ethical concerns about collecting weather data, tools like Amazon Web Services (AWS) and Microsoft Azure have large amounts of data at their fingertips. Even weather giants like AccuWeather have converted to pre-built algorithms that continue to improve over time.

Finally, the best predictions blend as much data, models, and methods as possible via post-processing to bring together information from multiple sources in order to improve the deterministic forecast as well as to quantify its uncertainty [4]. Of course, training these post-processing methods requires a large amount of both model and observational data and will be discussed in more detail below. Some of the best methods involve rich data mining techniques that blend computational intelligence with knowledge of the physics and dynamics of the system [4].

THE PROBLEM

One of the issues with weather forecasting has been how much data is used to make weather predictions. Although the resolution of numerical weather prediction models continues to improve, many of the processes that influence variables like precipitation are still not captured adequately at the scales of present operational models, and consequently precipitation forecasts have not yet reached the level of accuracy needed for hydrologic forecasting [5]. Post-processing of model output to account for local differences can enhance the accuracy and usefulness of these forecasts [5]. Quantitative precipitation forecasts (QPFs) are important for applications ranging from flash flood forecasting to long-term agricultural and water resource management. Yet accurately predicting precipitation is one of the most difficult tasks in meteorology. This is due to factors including the amount of available data, the time available to analyze it, and the complexity of weather events [6].

While enhancements to the resolution and physics of numerical weather prediction (NWP) models have significantly increased the accuracy of forecasts of many meteorological variables in recent years, similar improvements in the accuracy of precipitation forecasts have not been achieved. This is due to the physical complexity of precipitation processes and to the fact that the small temporal and spatial scales involved in such processes cannot be resolved by the numerical models [5].

Weather forecasting relies on a huge data collection network that includes land-based weather stations, weather balloons, and weather satellites [6]. Additionally, data from offshore buoys and ships operating at sea are used. Together, they form an observational network whose data are fed into computer models [6]. Limits in available computing power constrain the resolution and physical detail that can be used in a model.

One approach for bridging the gap between the model resolution and the desired forecast scale is to downscale the NWP model output by relating it to observations at specific locations of interest, that is, to “localize” NWP model output. The most widely used example of this is Model Output Statistics (MOS). In the context of MOS, multiple linear regression is used to relate temperature, cloud cover, probability of precipitation, and other variables of interest at specific locations to NWP model output [5]. The relationships are derived from a historical dataset and are in turn used to predict the variables from real-time NWP model output [5].
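As a minimal sketch of the MOS idea, the example below fits a multiple linear regression on a small historical sample and then applies it to new model output. The predictor choice (model temperature and model cloud fraction), the synthetic numbers, and the function names are assumptions made for illustration; operational MOS systems use many more predictors and real archives.

```python
def fit_linear(X, y):
    """Ordinary least squares for y ~ b0 + b1*x1 + ... via the normal equations."""
    rows = [[1.0] + list(r) for r in X]  # prepend the intercept column
    n = len(rows[0])
    # Augmented system [A^T A | A^T y]
    A = [[sum(r[i] * r[j] for r in rows) for j in range(n)]
         + [sum(r[i] * t for r, t in zip(rows, y))] for i in range(n)]
    # Gauss-Jordan elimination with partial pivoting
    for c in range(n):
        p = max(range(c, n), key=lambda k: abs(A[k][c]))
        A[c], A[p] = A[p], A[c]
        pivot = A[c][c]
        A[c] = [v / pivot for v in A[c]]
        for k in range(n):
            if k != c:
                f = A[k][c]
                A[k] = [vk - f * vc for vk, vc in zip(A[k], A[c])]
    return [A[i][n] for i in range(n)]


def predict(coef, x):
    """Apply the fitted relationship to one new set of NWP predictors."""
    return coef[0] + sum(b * v for b, v in zip(coef[1:], x))


# Synthetic "historical" pairs: (model temperature in deg C, model cloud fraction)
history_nwp = [(10.0, 0.2), (12.0, 0.8), (15.0, 0.5),
               (18.0, 0.1), (20.0, 0.9), (22.0, 0.4)]
# Synthetic station observations generated from a known linear relationship
history_obs = [1.5 + 0.9 * t - 2.0 * c for t, c in history_nwp]

coef = fit_linear(history_nwp, history_obs)
station_forecast = predict(coef, (16.0, 0.3))  # real-time NWP output for the site
```

Because the synthetic observations are exactly linear in the predictors, the fit recovers the generating coefficients; with real data the regression would only approximate the local relationship.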

In addition to linear regression, other data analysis techniques can be used to relate NWP model output to observed variables of interest. These techniques include generalized additive models and self-learning algorithms such as abductive machine learning and goal-oriented pattern detection [5]. Artificial neural networks are another approach that has been applied to the prediction of meteorological variables, including tornadoes, severe weather, and lightning [5].

KEY DATA AREAS

Six key areas to focus on are data volume, data variety, data velocity, data variability, data veracity, and data complexity [4].

DATA VOLUME

Numerical Weather Prediction (NWP) is a big data issue, requiring some of the largest computations for real-time processing [4]. NWP ingests large amounts of observational data for assimilation into the models and solves the nonlinear Navier-Stokes equations on grids of upwards of 100 million cells with time steps on the order of 20 s. This implies that on the order of 18 billion values of each model variable are handled hourly (100 million cells x 180 time steps per hour). Of course, not all of that data is stored: about 30 output variables are stored at 15-minute increments and another 700 at hourly increments [4].
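The hourly figure quoted above follows directly from the grid size and time step. A short check, using the round numbers from the text:

```python
GRID_CELLS = 100_000_000   # "upwards of 100 million grid cells"
TIME_STEP_S = 20           # "time steps on the order of 20 s"

steps_per_hour = 3600 // TIME_STEP_S
values_per_variable_per_hour = GRID_CELLS * steps_per_hour

print(steps_per_hour, values_per_variable_per_hour)
```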

DATA VARIETY

The data that are combined to provide a forecast typically include several different types of observations, some of which sample the surface variables at convenient locations that rarely correspond with the NWP grid points, while others depict the atmospheric vertical profile at particular locations. Yet other variables are sampled on a horizontal grid but with differing elevations [4]. Even where such observations are gridded, they may not be on the same type of map projection as are the NWP data. This all means that interpolation is a necessary step of any forecast process. Satellite irradiance values often depict the cloud top temperature. Since different clouds appear at differing atmospheric levels, these are indicative of cloud top height [4].

Some satellite instruments look through the clouds to determine properties of the atmosphere in vertical profiles, while other instruments, like surface-based radars, scan the environment and provide reflectivity in terms of distance, angle, and azimuth of the beam [4]. Hence, all these differing types of data, with their differing grid systems, must be coordinated to provide a sensible picture of the current weather before it is fed into the forecasting system [4]. The existence of standards and standardized formats for meteorological data, including metadata, significantly reduces the possibility of errors when processing disparate data [4].

One common standardization issue is the time stamp on the observations. It is common for specialized observations to be listed in local time. For such observations, it is sometimes unclear whether the time stamps change when moving from standard time to daylight saving time or vice versa. Another issue is the averaging time of observations. There are many small networks of specialized weather observations called mesonets. Although some of these are standardized, not all follow standardization on reporting details and averaging periods [4].

For instance, one dataset that brings together observations from a variety of mesonets includes data with differing averaging periods, with hourly data including everything from averages of 10 Hz data for the full hour, for fifteen minutes, for five minutes, or for one minute, to a single instantaneous value [4]. Some observations are recorded at the top of the hour, while others are an average of the prior hour. It is not a simple process to standardize the values that are provided. In using such data, it is important to read the information available on the details of each type of observation and to be aware of the best methods to deal with disparity [4].
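The two standardization issues described here, time stamps and averaging periods, can be illustrated with a small sketch. It assumes that each station's UTC offset is known from its metadata (the offset would change per record if the station observes daylight saving time) and that the target convention is an hourly mean labeled by the end of the hour. Both conventions and the function names are illustrative choices, not taken from the source.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone


def to_utc(local_naive: datetime, utc_offset_hours: float) -> datetime:
    """Attach the station's UTC offset (assumed known) and convert to UTC."""
    tz = timezone(timedelta(hours=utc_offset_hours))
    return local_naive.replace(tzinfo=tz).astimezone(timezone.utc)


def hourly_means(samples):
    """Average (utc_time, value) samples into hours labeled by the END of the hour."""
    buckets = defaultdict(list)
    for t, v in samples:
        start = t.replace(minute=0, second=0, microsecond=0)
        # A sample stamped exactly on the hour closes the previous hour.
        end = start if t == start else start + timedelta(hours=1)
        buckets[end].append(v)
    return {end: sum(vs) / len(vs) for end, vs in sorted(buckets.items())}


# Fifteen-minute temperatures reported in local standard time (UTC-5, hypothetical)
local_reports = [
    (datetime(2020, 1, 15, 12, 15), 10.0),
    (datetime(2020, 1, 15, 12, 30), 11.0),
    (datetime(2020, 1, 15, 12, 45), 12.0),
    (datetime(2020, 1, 15, 13, 0), 13.0),
]
utc_reports = [(to_utc(t, -5), v) for t, v in local_reports]
hourly = hourly_means(utc_reports)
```

Other networks may label the hour by its start or report an instantaneous value, so the labeling rule would have to be adapted per source after reading its documentation.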

DATA VELOCITY

Having large amounts of data arrive on disparate time scales creates a huge challenge to processing. For a full system to operate, one must prepare for different times of arrival for each NWP model and each source of observation. This means that as data arrives, it must be matched to its valid time and steps taken to account for any delays before merging it with data from other models, observations, or systems [4].
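A minimal sketch of matching by valid time follows. It assumes each forecast is described by an initialization time and a lead time in hours, and that observations are keyed by their observation time; the tuple layout and function names are hypothetical.

```python
from datetime import datetime, timedelta


def valid_time(init_time: datetime, lead_hours: int) -> datetime:
    """Time at which a forecast is valid: initialization time plus lead time."""
    return init_time + timedelta(hours=lead_hours)


def match_by_valid_time(forecasts, observations):
    """Pair each forecast with the observation at the same valid time, if one has arrived."""
    obs_by_time = {t: v for t, v in observations}
    pairs = []
    for init, lead, value in forecasts:
        vt = valid_time(init, lead)
        if vt in obs_by_time:
            pairs.append((vt, value, obs_by_time[vt]))
    return pairs


forecasts = [
    (datetime(2020, 1, 1, 0), 6, 3.0),   # 00 UTC run, 6 h lead
    (datetime(2020, 1, 1, 6), 0, 4.0),   # 06 UTC run, analysis time
    (datetime(2020, 1, 1, 0), 12, 5.0),  # valid at 12 UTC, observation not yet in
]
observations = [(datetime(2020, 1, 1, 6), 3.5)]
pairs = match_by_valid_time(forecasts, observations)
```

Forecasts from different runs that share a valid time end up paired with the same observation, while forecasts whose observation has not yet arrived are simply left unmatched until it does.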

DATA VARIABILITY

The realities of receiving the different data types in real time imply that one must be prepared for any of the data sources to be delayed and must have plans for graceful degradation of predictions when certain sources of data are not available in time [4]. Because this is a frequent occurrence, fallback routines are necessary for each type of data that may be missing. Because the machine learning algorithms are trained on the assumption that all the data are present, it is also necessary to provide forecast models that assume missing data. Note that this process becomes even more complex when more than a single type of data is missing at the time that the forecast must be delivered [4].
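One way to picture graceful degradation is a table of models, each trained for a particular subset of inputs, from which the richest usable model is chosen at delivery time. The source does not prescribe this mechanism; the model names, input names, and selection rule below are assumptions for illustration.

```python
def choose_model(available, models):
    """Pick the model whose required inputs are all available and that uses the most inputs."""
    usable = [(required, name) for required, name in models if required <= available]
    if not usable:
        raise LookupError("no fallback model for the available inputs")
    return max(usable, key=lambda item: len(item[0]))[1]


# Hypothetical fallback chain, from the full blend down to climatology
MODELS = [
    (frozenset({"nwp", "surface_obs", "satellite"}), "full_blend"),
    (frozenset({"nwp", "surface_obs"}), "no_satellite"),
    (frozenset({"nwp"}), "nwp_only"),
    (frozenset(), "climatology"),
]

print(choose_model({"nwp", "surface_obs", "satellite"}, MODELS))
print(choose_model({"nwp", "satellite"}, MODELS))
print(choose_model(set(), MODELS))
```

The number of fallback models grows quickly with the number of sources that can be missing together, which is the added complexity noted above.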

DATA VERACITY

Data quality is a critical issue when training the computational intelligence models as well as in real-time. There are frequent issues with incorrect data that must be identified. For instance, there is a range of expected temperature values and when an observation is far from the expected range for the season and time of day at a location, it can be flagged for potential error [4]. An additional check could be made on the previous value of temperature to see if the change in time is within reason. For weather variables, however, one must consider that occasionally rapid changes or abnormal values may be real.

Temperature changes could occur rapidly upon frontal passage. There are occasional extreme values of each weather variable. For instance, during times of flooding, precipitation observations could appear abnormal when in fact, they are correct for that unusual case. This implies that quality control algorithms must be carefully constructed to identify these possibilities [4].
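The range check and the change-in-time check described in this section can be sketched as follows. The thresholds are hypothetical and would in practice depend on location, season, and time of day. Observations are flagged rather than discarded, since a frontal passage or a flood can produce values that look abnormal but are real.

```python
def qc_flags(values, low, high, max_step):
    """Return one set of flags per observation; values are flagged, never dropped."""
    flags = []
    previous = None
    for v in values:
        f = set()
        if not (low <= v <= high):
            f.add("range")   # outside the expected range for this site and season
        if previous is not None and abs(v - previous) > max_step:
            f.add("step")    # change since the previous value is unusually large
        flags.append(f)
        previous = v
    return flags


# Hypothetical temperature series (deg C) with one suspicious spike
temps = [10.0, 10.5, 25.0, 11.0, 4.0]
flags = qc_flags(temps, low=0.0, high=20.0, max_step=5.0)
```

In this series the spike is flagged by both checks, while the final drop of 7 degrees is flagged only by the step check and could be a genuine frontal passage that a reviewer or a secondary rule would need to confirm.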

DATA COMPLEXITY

These issues of data volume, variety, velocity, variability, and veracity point to the difficulties of attempting to blend these data to provide accurate forecasts in real-time. For example, observations are used for at least three purposes in making the forecast: 1) training the computational intelligence algorithms, 2) identifying the current conditions to provide the necessary information for the current prediction, and 3) assimilation into the NWP models [4]. After the prediction is made, data are again required for verification and validation. How to use the different sources of data well for each of these purposes without compromising the other uses is challenging [4].

RELATED WORK

This section focuses on work that has been done on weather forecasting using artificial neural networks (ANNs) [3]; the networks themselves are discussed later in this paper. In temperature forecasting one must distinguish between forecast horizons, for example, the temperature one hour ahead versus the minimum and maximum temperature of a given day. Several studies have been carried out and different ANN models have been tested. Kaur [8] and Maqsood [7] described a model that predicts the hourly temperature, wind speed, and relative humidity 24 hours ahead [3]. Training and testing were done separately for the winter, spring, summer, and fall seasons.

The authors compared Multilayer Perceptron Networks (MLP), the Elman Recurrent Neural Network (ERNN), the Radial Basis Function Network (RBFN), the Hopfield Model (HFM), and ensembles of these networks [3]. The MLP was trained by back propagation, while the RBFN uses natural unsupervised learning. The authors suggested one hidden layer with 72 neurons for the MLP network and 2 hidden layers with 180 neurons for the RBFN as the optimal architectures [3]. The log-sigmoid is the activation function for the hidden layer units of the MLP network. The RBFN uses a Gaussian activation function, and the output layer is pure linear in both cases [3].

The accuracy measure used was the mean absolute percentage error (MAPE). RBFN had the best performance among the single networks; MLP reached about the same accuracy, but its learning process was more time consuming. During the winter and spring, humidity prediction had the lowest MAPE, while in summer and fall the temperature forecast performed best [3].

The ensembles outperformed all single networks. Two different types of ensembles were used: weighted average (WA) and winner takes all (WTA) [3]. WTA had the lowest MAPE and therefore the highest accuracy. Another work focused on maximum and minimum temperature forecasting and relative humidity prediction using time series analysis. The network model used was a multilayer feedforward ANN with back propagation learning [3].
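The source does not spell out how the two ensemble types were computed. The sketch below shows one common reading, stated here as an assumption: the weighted average weights each member by the inverse of its validation error, and winner takes all returns the prediction of the member with the lowest validation error.

```python
def weighted_average(predictions, val_errors):
    """Combine member predictions, weighting each by 1 / validation error (assumed rule)."""
    weights = [1.0 / e for e in val_errors]
    total = sum(weights)
    return sum(w * p for w, p in zip(weights, predictions)) / total


def winner_takes_all(predictions, val_errors):
    """Return the prediction of the member with the lowest validation error (assumed rule)."""
    best = min(range(len(val_errors)), key=val_errors.__getitem__)
    return predictions[best]


# Hypothetical temperature predictions (deg C) from three member networks
member_predictions = [10.0, 12.0, 14.0]
member_val_errors = [2.0, 4.0, 4.0]   # e.g. MAPE on a held-out set

wa = weighted_average(member_predictions, member_val_errors)
wta = winner_takes_all(member_predictions, member_val_errors)
```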

Direct and statistical input parameters and the input period were compared [3]. For minimum and maximum temperature forecasting, the optimum appeared to be a fifteen-week period of input data. The input features were statistics of the maximum and minimum temperature: moving average, exponential moving average, oscillator, rate of change, and the third moment. For the fifteen-week period the error was less than three percent. The main result was that, in general, statistical parameters can be used to extract trends. Good parameters are the moving average, exponential moving average, oscillator, rate of change, and moments [3].
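The statistical input features named above can be computed from a temperature series as sketched below. The exact definitions used in the cited work are not given in the source, so these are common textbook forms; in particular, the oscillator is assumed here to be the difference between a short and a long moving average, and the third moment is taken about the mean.

```python
def moving_average(x, n):
    """Mean of the last n values."""
    return sum(x[-n:]) / n


def exponential_moving_average(x, alpha):
    """Recursive EMA with smoothing factor alpha, seeded with the first value."""
    ema = x[0]
    for v in x[1:]:
        ema = alpha * v + (1 - alpha) * ema
    return ema


def rate_of_change(x, n):
    """Relative change between the latest value and the value n steps earlier."""
    return (x[-1] - x[-1 - n]) / x[-1 - n]


def oscillator(x, short, long):
    """Short moving average minus long moving average (assumed definition)."""
    return moving_average(x, short) - moving_average(x, long)


def third_moment(x):
    """Third central moment of the series."""
    m = sum(x) / len(x)
    return sum((v - m) ** 3 for v in x) / len(x)


# Hypothetical weekly maximum temperatures (deg C)
weekly_max = [20.0, 22.0, 21.0, 24.0, 23.0]
features = [
    moving_average(weekly_max, 3),
    exponential_moving_average(weekly_max, 0.5),
    oscillator(weekly_max, 2, 5),
    rate_of_change(weekly_max, 4),
    third_moment(weekly_max),
]
```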

A three-layer MLP network with six hidden neurons, a sigmoid transfer function for the hidden layer, and a pure linear function for the output layer was found to yield the best performance [3]. The scaled conjugate gradient algorithm was used for training. Wind speed, wind direction, dry bulb temperature, wet bulb temperature, relative humidity, dew point, pressure, visibility, and amount of cloud were the input parameters measured every three hours [3]. The other input parameters were measured daily: gust wind, mean temperature, maximum temperature, minimum temperature, precipitation, mean humidity, mean pressure, sunshine, radiation, and evaporation [3].

Most of the approaches mentioned above use MLP networks. One approach used evolutionary neural networks in combination with genetic algorithms to predict the maximum temperature per day [10]. The suggested input parameters are month, day, daily precipitation, max temperature, min temperature, max soil temperature, min soil temperature, max relative humidity, min relative humidity, solar radiation, and wind speed. The accuracy was 79.49 percent for a 2-degree error bound. Training was done by back propagation.

The author assumes that the accuracy could be increased by using a larger training set, different training algorithms, and more atmospheric values. The most often used architecture was MLP; the RBFN had also been used and generated good results [10].

Machine Learning for Weather Forecasting

Next, we looked at machine learning. Machine learning is a branch of artificial intelligence and a data science technique that creates a model from a training dataset. A model is basically a formula that outputs a target value based on individual weights and values for each training variable. The weight assigned to each variable (sometimes between 0 and 1) tells the model how that variable is related to the target value. There must be enough training data to determine the best possible weights for all the variables. When the weights are learned as accurately as possible, the model can predict the correct output, or target value, given a test data record [12].

Utilizing simple machine learning techniques relieves us of the complex and resource-hungry weather models of traditional weather stations. This approach has immense possibilities in the realm of weather forecasting. Such a forecasting model can be offered to the public as a web service very easily [12].

Overview of Machine Learning-Based Strategies and Forecast Performance Factors

The main objective of the algorithms developed in this area is to obtain a mathematical model that fits the data [13]. Once this model represents accurately known data, it is used to perform the prediction using new data. In this way, the learning process involves two steps: the estimation of unknown parameters in the model, based on a given dataset, and the output prediction, based on new data and the parameters obtained previously [13].

In this way, machine learning strategies find models between inputs and outputs, even if the system dynamics and its relations are difficult to represent. For this reason, this approach has been widely implemented in a great variety of domains, such as pattern recognition, classification, and forecasting problems [13].

Common Methods Implemented in Machine Learning:

Supervised Learning, which has information of the predicted outputs to label the training set and is used for the model training [13].

Unsupervised Learning, which does not have information about the desired output to label the training data. Consequently, the learning algorithm must find patterns to cluster the input data [13].

Semi-supervised Learning, which uses labeled and unlabeled data in the training process [13].

Reinforcement Learning, which uses the maximization of a scalar reward or reinforcement signal to perform the learning process, being positive or negative based on the system goal. Positive ones are known as “rewards” while negative ones are known as “punishments” [13].

Figure 2 roughly illustrates how to choose between the main categories of machine learning. There are three main types of learning [1]: (a) Supervised Learning, when the training set is labeled (i.e., it contains the attribute that the model is trying to estimate); (b) Unsupervised Learning, when the training set is not labeled; and (c) Reinforcement Learning, when the learned results lead to actions that change the environment [16].

Figure 2. Machine learning methods categorization [16].

Considering the large number of ML-based approaches developed in forecasting applications, this work is focused on the most widely implemented ML strategies in temperature prediction: ANN and SVM. Although these methods are trained in a supervised way, neural network algorithms capable of unsupervised training could be included as well [13].

In particular, it is important to note that the most used input features in this field include the previous values of temperature as well as relative humidity, solar radiation, rain, and wind speed measurements. The evaluation measures most frequently used in these works to assess the performance of these algorithms include the Mean Absolute Percentage Error (MAPE), the Mean Absolute Error (MAE), the Median Absolute Error (MdAE), the Root Mean Squared Error (RMSE), and the Mean Squared Error (MSE) [13].

Other measures proposed in the literature can also be used, such as the correlation coefficient R (Pearson coefficient) or the index of agreement d, which are usually normalized to the (0, 1) range [13]. These algorithms and their particularities will be discussed in the following subsections.
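For reference, the evaluation measures listed above can be written in a few lines each. The sketch uses the standard textbook definitions; the index of agreement is taken in Willmott's original form, which is an assumption since the source does not state which variant is meant. The sample numbers are made up.

```python
import math
from statistics import median


def mae(obs, pred):
    """Mean Absolute Error."""
    return sum(abs(o - p) for o, p in zip(obs, pred)) / len(obs)


def mdae(obs, pred):
    """Median Absolute Error."""
    return median(abs(o - p) for o, p in zip(obs, pred))


def mse(obs, pred):
    """Mean Squared Error."""
    return sum((o - p) ** 2 for o, p in zip(obs, pred)) / len(obs)


def rmse(obs, pred):
    """Root Mean Squared Error."""
    return math.sqrt(mse(obs, pred))


def mape(obs, pred):
    """Mean Absolute Percentage Error in percent; undefined if any observation is 0."""
    return 100.0 * sum(abs(o - p) / abs(o) for o, p in zip(obs, pred)) / len(obs)


def pearson_r(obs, pred):
    """Pearson correlation coefficient R."""
    mo, mp = sum(obs) / len(obs), sum(pred) / len(pred)
    cov = sum((o - mo) * (p - mp) for o, p in zip(obs, pred))
    var_o = sum((o - mo) ** 2 for o in obs)
    var_p = sum((p - mp) ** 2 for p in pred)
    return cov / math.sqrt(var_o * var_p)


def index_of_agreement(obs, pred):
    """Willmott's index of agreement d (assumed variant)."""
    mo = sum(obs) / len(obs)
    num = sum((o - p) ** 2 for o, p in zip(obs, pred))
    den = sum((abs(p - mo) + abs(o - mo)) ** 2 for o, p in zip(obs, pred))
    return 1.0 - num / den


observed = [10.0, 20.0, 30.0, 40.0]    # hypothetical observed temperatures
predicted = [12.0, 18.0, 33.0, 37.0]   # hypothetical model predictions
print(mae(observed, predicted), rmse(observed, predicted), mape(observed, predicted))
```

MAPE divides by the observed value, so it behaves poorly for temperatures near 0 on the Celsius scale; this is one reason the absolute and squared error measures are often reported alongside it.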

Neural Networks

A neural network [14] is a powerful data modeling tool that can capture and represent complex input and output relationships. The motivation for the development of neural network technology stemmed from the desire to implement an artificial system that could perform intelligent tasks like those performed by the human brain. A neural network resembles the human brain in the following two ways [14]:

A neural network acquires knowledge through learning.

A neural network's knowledge is stored within interneuron connection strengths known as synaptic weights.

The true power and advantage of neural networks lie in their ability to represent both linear and nonlinear relationships directly from the data being modeled [14]. Traditional linear models are simply insufficient when it comes to modeling data that contains nonlinear characteristics. A neural network model is a structure that can be adjusted to produce a mapping from a given set of data to features of or relationships among the data [14].

The model is adjusted, or trained, using a collection of data from a given source as input, typically referred to as the training set. After successful training, the neural network will be able to perform classification, estimation, prediction, or simulation on new data from the same or similar sources [14].

An Artificial Neural Network (ANN) [5] is an information processing model that is inspired by the way biological nervous systems, such as the brain, process information. The key component of this model is the novel structure of the information processing system. It is composed of a huge number of highly interconnected processing elements (neurons) working in unison to solve specific problems [5]. Just like humans, artificial neural networks learn by example. An ANN is configured for a particular application, such as pattern recognition or data classification, through a learning process. Learning in biological systems involves adjustments to the synaptic connections that exist between the neurons [14].

Artificial neural networks are a computational approach in machine learning that simulates the human brain. They are used to analyze a problem through a learning process by connecting neurons in a multilayer structure and controlling the connection strength between individual neurons. There are several types of ANNs depending on the neuron modeling and connection methods. Among those types is the multilayer perceptron (MLP) [18].

The MLP is composed of three layers (input layer, output layer, and hidden layer between them); each layer contains interconnected nodes. In an MLP, nonlinear problems can be analyzed using the hidden layer and a nonlinear activation function. Learning is implemented using a back-propagation algorithm and the gradient descent method in which an update rule is applied to minimize the difference between the target and output values [15].
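A minimal sketch of such a three-layer MLP is given below, with a log-sigmoid hidden layer, a linear output unit, and weights updated by gradient descent on the squared error computed through back propagation. The layer sizes, learning rate, number of epochs, and the toy sine-shaped target are illustrative assumptions and are not the configurations reported in the cited studies.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


class TinyMLP:
    """Input layer -> one sigmoid hidden layer -> single linear output unit."""

    def __init__(self, n_in, n_hidden, seed=0):
        rng = random.Random(seed)
        self.w1 = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)]
                   for _ in range(n_hidden)]
        self.b1 = [0.0] * n_hidden
        self.w2 = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        self.b2 = 0.0

    def forward(self, x):
        h = [sigmoid(sum(w * v for w, v in zip(row, x)) + b)
             for row, b in zip(self.w1, self.b1)]
        y = sum(w * a for w, a in zip(self.w2, h)) + self.b2
        return h, y

    def train_step(self, x, target, lr):
        """One gradient-descent update for the loss 0.5 * (output - target)^2."""
        h, y = self.forward(x)
        err = y - target                                  # dLoss/dOutput
        for j, a in enumerate(h):
            # Error propagated back through the sigmoid of hidden unit j
            grad_hidden = err * self.w2[j] * a * (1.0 - a)
            self.w2[j] -= lr * err * a
            for i, v in enumerate(x):
                self.w1[j][i] -= lr * grad_hidden * v
            self.b1[j] -= lr * grad_hidden
        self.b2 -= lr * err
        return 0.5 * err * err


def mean_loss(net, data):
    return sum(0.5 * (net.forward(x)[1] - t) ** 2 for x, t in data) / len(data)


# Toy nonlinear target standing in for a real input-output relationship
data = [([i / 10.0], math.sin(math.pi * i / 10.0)) for i in range(11)]
net = TinyMLP(n_in=1, n_hidden=4)
loss_before = mean_loss(net, data)
for _ in range(200):
    for x, t in data:
        net.train_step(x, t, lr=0.1)
loss_after = mean_loss(net, data)
```

The update rule moves each weight against the gradient of the error, which is the sense in which the difference between target and output values is minimized.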

Numerical Weather Prediction

Numerical Weather Prediction (NWP) is the use of mathematical models to predict atmospheric behavior for a specific period. A model represents the state of the atmosphere at a fixed time, including temperature, wind components, and other variables, over a discrete domain [6]. BRAMS is one of the most widely employed numerical models in regional weather centers in Brazil [6]. BRAMS, developed by the atmospheric science department at Colorado State University, is a numerical weather prediction model used to simulate atmospheric conditions. The new developments integrated into BRAMS include modified computational modules to better simulate the tropical atmosphere [6].

Current Technologies

In this section, we looked at two popular companies and some of their technologies: Amazon Web Services (AWS) and Mini-AWS, as well as Microsoft Azure.

Amazon Web Services (AWS): Mini-AWS

A Mini-AWS is a miniature weather station developed to overcome the drawbacks of the AWS [15]. The Mini-AWS is approximately seven times less expensive than the AWS (1,300 USD versus 9,000 USD) and requires low maintenance and repair costs. The installation site selection is hardly limited because it requires a very small space; hence, it can be installed in any place deemed suitable, ensuring the stable and steady collection of data. It can also be installed on a mobile object such as a vehicle. However, it is exposed to the external environment depending on the installation site and the collected observation data should be corrected to make them amenable for application in weather forecasting [15].

A Mini-AWS is essentially a miniature weather station (dimensions: 157 mm x 167 mm x 34 mm) capable of measuring and recording air temperature, relative humidity, and atmospheric pressure (Figure 3). Its advantages over the AWS include low-cost installation and maintenance/repair and ease of installation in areas where AWS installation is constrained for geographic and economic reasons. It can also be mounted on a vehicle and used as a mobile weather station because its precise position can be tracked using GPS (Global Positioning System) and GLONASS (Global Navigation Satellite System, an alternative to GPS). Additionally, the power supply for its sensor and communication units can be automatically switched on and off as needed to save energy [15].

Figure 3. Picture of a Mini-AWS

Microsoft Azure: D3 Express and Advanced

In 2008, Microsoft started its initiative in cloud computing with the release of Windows Azure at its annual Professional Developers Conference [6]. The initial model was platform as a service, allowing applications written in the programming languages supported by the .NET Framework to be developed and run. Since then, companies like AccuWeather have adopted the platform to better forecast the weather.

In May 2017, AccuWeather launched AccuWeather D3 Express, a cloud-based analytics product that quantifies the impact of disruptive weather on a business using a rating of 1 (insignificant) to 10 (extreme). AccuWeather supplies historical weather from its data centers, using a Python script running on a Linux-based server to load new files daily. This data is then loaded into Azure SQL Database using the bulk copy protocol (BCP) [9].

Customers upload their historical sales data using the D3 Advanced Historical Sales Upload Form that runs in an Azure App Service web app. An SQL Database serves as the data store for both AccuWeather weather data and the customer’s historical sales data, providing aggregation and cleansing [9]. AccuWeather uses Azure Machine Learning Studio to train models for each subscriber or customer based on historical weather and their provided sales data. Azure Data Factory generates updated sales forecasts based on changing weather forecasts and stores data in the SQL Database for the D3 Advanced Analytics Visualizations [9]. D3 Express combines several Azure services to move, store, and manage data and to present data to the web or API client; these services include Blob storage, Azure App Service, and Azure API Management [9].

As soon as D3 Express entered the market, AccuWeather began work on the next version, D3 Advanced, which delivers even more customized predictions using machine learning [9]. AccuWeather built D3 Advanced using Machine Learning and other Azure data services. AccuWeather D3 Advanced provides actionable insights based on an automated comparison of a customer’s unique sales history and AccuWeather historical weather data. Businesses upload historical sales data for specific products and minutes later get spot analytics on a dashboard showing how sales of those products will be affected by specific weather conditions such as wind, rain, humidity, and others [9].

AccuWeather’s D3 Platform is a suite of customized products to help businesses prepare for weather events that would impact operations and production [17]. The first is the D3 Express Platform, which features AccuWeather’s Impact Indicator™ uses 10 years of historical weather data to classify disruptive weather by event and magnitude. Using a custom proprietary algorithm developed by AccuWeather, they have created an impact value ranked on a scale of 1 (Insignificant) to 10 (Extreme) for how disruptive a weather event will be to a business. The impact, or risk value, considers the probability of a weather event occurring and the loss that the event caused at a location [17].

AccuWeather D3 Express alerts clients when extremely disruptive weather events are forecast across all of their locations, so that they can follow the latest weather developments and have time to strategize, meet anticipated demand, and stay ahead of the competition [17].

Training with Data

A vast amount of meteorological data is available that could be used to train forecast models. The data handling system of the European Centre for Medium-Range Weather Forecasts (ECMWF) provides access to over 210 Petabytes of primary data, and the ECMWF data archive grows by about 233 Terabytes per day [11]. If unfiltered observational data are used as input for networks, biases between different observation systems need to be addressed, and the networks need to be robust against missing or faulty input data [11].
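One common way to make a network input tolerant of missing or faulty observations is to replace bad values with a neutral fill value and pass a validity mask alongside the data. The following is a minimal sketch of that idea, not the approach used in [11]; the function name `clean_observations` and the plausibility limits are assumptions.

```python
import math


def clean_observations(values, valid_min, valid_max):
    """Replace missing or implausible readings and return a validity mask.

    A reading is treated as invalid if it is None, NaN, or outside the
    range [valid_min, valid_max]. Invalid readings are replaced by the
    mean of the valid ones, and the mask (1.0 valid, 0.0 invalid) can be
    given to a model as an extra input feature.
    """
    is_valid = [
        v is not None and not math.isnan(v) and valid_min <= v <= valid_max
        for v in values
    ]
    good = [v for v, ok in zip(values, is_valid) if ok]
    if not good:
        raise ValueError("no valid observations to compute a fill value")
    fill = sum(good) / len(good)
    filled = [v if ok else fill for v, ok in zip(values, is_valid)]
    mask = [1.0 if ok else 0.0 for ok in is_valid]
    return filled, mask


# Hypothetical temperature readings in degrees Celsius: one missing value,
# one sensor fault (999.0), and one NaN.
readings = [10.0, None, 12.0, 999.0, float("nan")]
filled, mask = clean_observations(readings, valid_min=-90.0, valid_max=60.0)
```

Addressing biases between observation systems is a separate problem that this sketch does not attempt.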

It is also questionable how much of this data can be used, given the ever-evolving climate. A changing climate shortens the time frame from which training data can be drawn: a model trained as a black box cannot be expected to provide reliable predictions if the underlying climate state has changed, or if events that never occurred in the training data, such as an ice-free Arctic during summer, start to happen in the real world. Conventional models show significant biases in long-term simulations. These biases will also be a problem for models based on neural networks; they may change the local “climate” within a couple of days of simulation and push the network into weather regimes that were not covered by the training data [11].

Existing weather and climate models could be used to generate a nearly unlimited amount of training data for a forecast system based on neural networks, including data for a changing climate [11]. However, the quality of the existing models and the assimilation system would limit the quality of predictions from the neural network forecast system, reducing the advantage of the deep-learning approach. If it is possible to replicate the dynamics of the model in enough detail, more difficult tasks, such as the use of real observations as input, could be addressed [11].

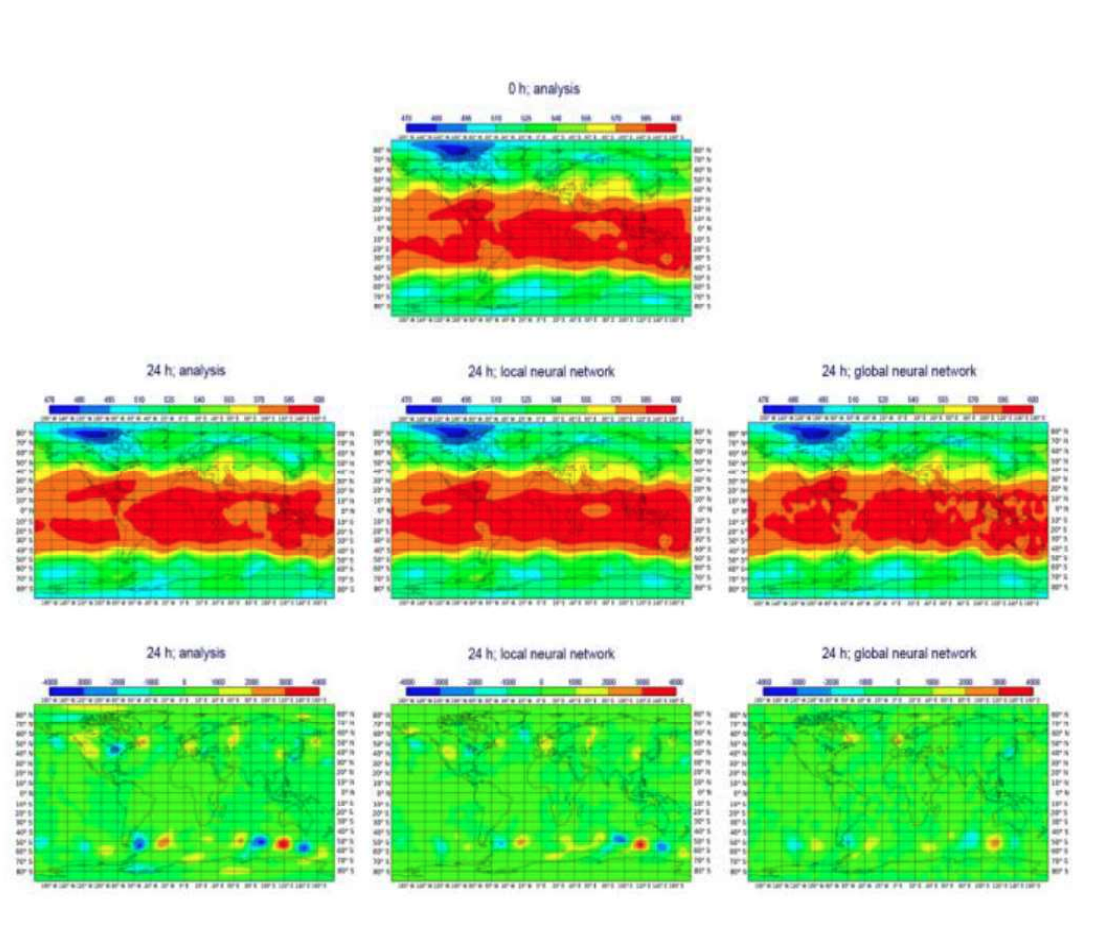

Figure 4. Top: Z500 from analysis on 1 March 2017 that is used as initial conditions for forecasts. Middle: Z500 for the analysis on 2 March for reference (left) as well as the local network and the global network configurations 1 day into the forecast (middle and right). Bottom: difference between Z500 for the analysis on 1 and 2 March (left) as well as the difference between initialization and after 24h for the local and the global network configuration (middle and right). The local network uses a 9 × 9 stencil and fixed polar regions.

The Future of Weather Forecasting

As these technologies continue to advance and more data is accumulated, the accuracy of weather forecasting will continue to improve. This is why the continued study of artificial intelligence, machine learning, and artificial neural networks is so important. It will not only help make better weather predictions but also improve the way humans understand the world.

Conclusion

By better understanding the current capabilities and advancements that Artificial Intelligence, Machine Learning, and Neural Networks have brought to humanity’s understanding of the weather, we can create a better future. This paper focused on how these technologies have helped to take in larger amounts of data and make more accurate predictions. Machine learning technology can provide intelligent models, which are much simpler than traditional physical models. They are less resource-hungry and can easily be run on almost any computer, including mobile devices [3].

Weather forecasting is the application of science and technology to predict the state of the atmosphere for a given location. Weather forecasts are made by collecting quantitative data about the current state of the atmosphere and using scientific understanding of atmospheric processes to project how the atmosphere will evolve. The chaotic nature of the atmosphere, the massive computational power required to solve the equations that describe the atmosphere, the error involved in measuring the initial conditions, and an incomplete understanding of atmospheric processes mean that forecasts become less accurate as the difference between the current time and the time for which the forecast is being made increases [3].
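The effect of a small error in the initial conditions of a chaotic system can be illustrated with a toy example. The sketch below uses the logistic map, a standard textbook chaotic system and not an atmospheric model, to show how two runs that start almost identically drift apart as the lead time grows. The starting value, the perturbation size, and the number of steps are arbitrary assumptions.

```python
def logistic_trajectory(x0, steps, r=4.0):
    """Iterate the logistic map x -> r * x * (1 - x) and return all states."""
    states = [x0]
    for _ in range(steps):
        states.append(r * states[-1] * (1.0 - states[-1]))
    return states


# "Truth" run and a "forecast" run whose initial state is off by one part
# in a billion, standing in for a tiny measurement error.
truth = logistic_trajectory(0.2, steps=60)
forecast = logistic_trajectory(0.2 + 1e-9, steps=60)

# Absolute forecast error at each lead time (step index).
errors = [abs(t - f) for t, f in zip(truth, forecast)]

early_error = errors[5]      # still tiny shortly after initialization
largest_error = max(errors)  # grows to the size of the signal itself
```

In this toy system the error stays negligible for the first few steps and then grows until the forecast is no better than a guess, which mirrors why forecast accuracy falls as lead time increases.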

There are a variety of end uses to weather forecasts. Weather warnings are important forecasts because they are used to protect life and property. Forecasts based on temperature and precipitation are important to agriculture, and therefore to traders within commodity markets. Temperature forecasts are used by utility companies to estimate demand over coming days. On an everyday basis, people use weather forecasts to determine what to wear on a given day [3].

Since outdoor activities are severely curtailed by heavy rain, snow, and wind chill, forecasts can be used to plan activities around these events. Artificial intelligence, machine learning, and artificial neural networks are crucial to predicting the weather effectively and to helping overcome these problems. The advantage these technologies have over other weather forecasting methods is that they can minimize error using various algorithms and give a predicted value that is nearly equal to the actual value [3].

Machine learning models can predict weather features accurately enough to compete with traditional models. They can also use historical data from surrounding areas to predict the weather of a given area, an approach that has been shown to be more effective than considering only the area for which the forecast is made [12].

The data mining techniques that blend the models and the observations are also becoming more complex. For instance, deep machine learning is demonstrating some success in predictions in many fields. But such methods require yet more data. Again, it will be interesting to watch the balance between data volume and its smart usage develop over time [4].

Machine learning techniques and analog ensembles critically depend on archived data for training and sampling purposes. Considering the volume of model output and observation data sets, a subset of essential variables must be selected and archived at appropriate frequency for future use [4].

Because big data continues to grow, weather forecast predictions are becoming much more intricate. However, due to advancements in technologies like artificial intelligence, machine learning, and neural networks, these predictions have become much faster in recent years. The accuracy of weather forecasts will continue to improve, and weather models will continue to be refined [9]. The observational networks that supply weather data are expanding, but no matter how complex weather models become, meteorologists will always make forecasting errors. This is because the instruments used to measure weather-related variables will always be imperfect, and Earth’s atmosphere is highly complex and will always be challenging to fully simulate in a weather model [9]. As artificial intelligence, machine learning, and deep neural networks continue to improve, so will the accuracy of weather forecast predictions.

REFERENCES

McGovern, Amy, et al. "Using artificial intelligence to improve real-time decision making for high-impact weather." Bulletin of the American Meteorological Society 98.10 (2017): 2073-2090.

Cho, Dongjin, et al. "Comparative Assessment of Various Machine Learning-Based Correction Methods for Numerical Weather Prediction Model Forecasts of Extreme Air Temperatures in Urban Areas." Earth and Space Science 7.4 (2020): e2019EA000740.

Abhishek, Kumar, et al. "Weather forecasting model using artificial neural network." Procedia Technology 4 (2012): 311-318.

Haupt, Sue Ellen, and Branko Kosovic. "Big data and machine learning for applied weather forecasts: Forecasting solar power for utility operations." 2015 IEEE Symposium Series on Computational Intelligence. IEEE, 2015.

Kuligowski, Robert J., and Ana P. Barros. "Localized precipitation forecasts from a numerical weather prediction model using artificial neural networks." Weather and forecasting 13.4 (1998): 1194-1204.

Carreño, Emmanuell D., et al. "Performance Analysis of a Numerical Weather Prediction Application in Microsoft Azure." Memory (GB) 14.56: 56.

Maqsood, Imran, Muhammad Riaz Khan, and Ajith Abraham. "An ensemble of neural networks for weather forecasting." Neural Computing & Applications 13 (2004): 112-122.

Kaur, Amanpreet, J. K. Sharma, and Sunil Agrawal. "Artificial neural networks in forecasting maximum and minimum relative humidity." International Journal of Computer Science and Network Security 11.5 (2011): 197-199.

"Businesses Predict Weather Impact Using Cloud-based Machine Learning." Microsoft Customers Stories, customers.microsoft.com/it-it/story/accuweather-partner professional-services-azure. Accessed 14 Dec. 2020.

Caltagirone, Sergio. "Air Temperature Prediction Using Evolutionary Artificial Neural Networks". Master's thesis, University of Portland College of Engineering, 5000 N. Willamette Blvd. Portland, OR 97207, 12 2001.

Dueben, Peter D., and Peter Bauer. "Challenges and design choices for global weather and climate models based on machine learning." Geoscientific Model Development 11.10 (2018): 3999-4009.

Jakaria, A. H. M., Md Mosharaf Hossain, and Mohammad Ashiqur Rahman. "Smart weather forecasting using machine learning: a case study in Tennessee." arXiv preprint arXiv:2008.10789 (2020).

Cifuentes, Jenny, et al. "Air temperature forecasting using machine learning techniques: a review." Energies 13.16 (2020): 4215.

Baboo, S. Santhosh, and I. Kadar Shereef. "An efficient weather forecasting system using artificial neural network." International journal of environmental science and development 1.4 (2010): 321.

Ha, Ji-Hun, et al. "Error correction of meteorological data obtained with Mini-AWSs based on machine learning." Advances in Meteorology 2018 (2018).

Ribeiro, Mauro, Katarina Grolinger, and Miriam AM Capretz. "Mlaas: Machine learning as a service." 2015 IEEE 14th International Conference on Machine Learning and Applications (ICMLA). IEEE, 2015.

AccuWeather Data Driven Decisions, d3.accuweather.com/Home/About. Accessed 15 Dec. 2020.

![Figure 2 Machine learning methods categorization [16].](https://images.squarespace-cdn.com/content/v1/659f5eec7e94cc7c76b91bdc/7631b0e7-c86b-4871-8425-003d1e508f42/Figure+2.png)